Diffusion and generative models

Developing and applying diffusion-based generative models for image restoration, conditional inference, and scientific applications.

Generative models and Bayesian inference are key tools for accelerating scientific discovery by enabling efficient exploration of large design spaces. We work on both foundational aspects of these models and their application to scientific and engineering problems.

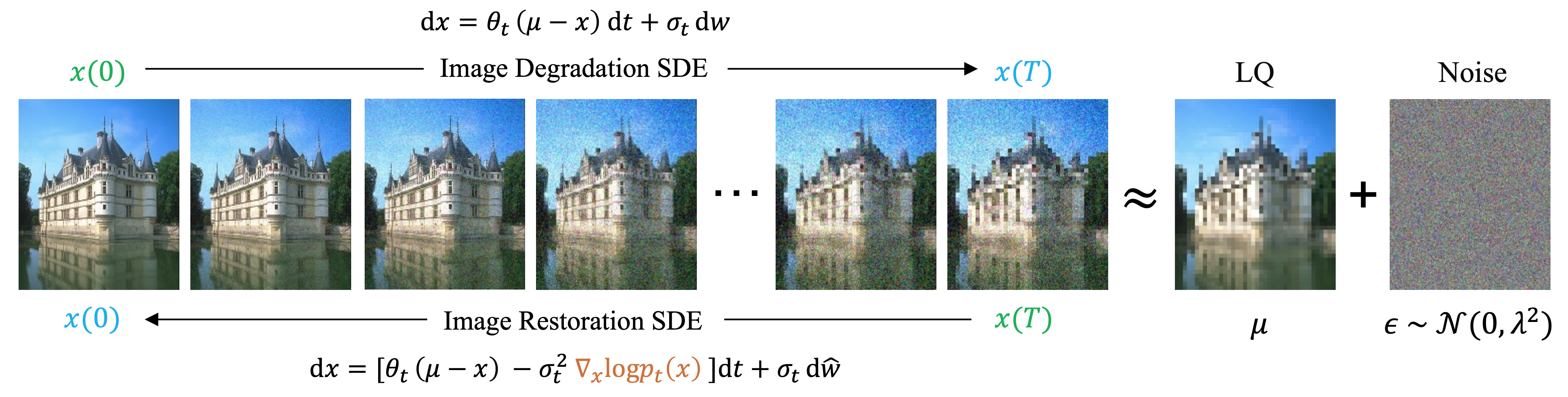

Image restoration with mean-reverting SDEs

Our work on diffusion models for image restoration introduced a mean-reverting stochastic differential equation (SDE) that transforms a high-quality image into a noisy version of its degraded counterpart: the degraded image serves as the mean state, and the Gaussian noise around it has fixed variance. By simulating the corresponding reverse-time SDE, we restore the original image without relying on any task-specific prior knowledge. The forward SDE has a closed-form solution, allowing exact computation of the time-dependent score. The method achieves highly competitive performance on deraining, deblurring, denoising, super-resolution, inpainting, and dehazing, and has been widely adopted since its publication at ICML 2023.
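The forward process described above can be illustrated with a minimal numerical sketch. It assumes a constant mean-reversion rate `theta` and a stationary noise level `lam` (both hypothetical values chosen for illustration), a random array standing in for the clean image, and a made-up degradation producing `mu`. Under these assumptions the SDE dx = theta (mu - x) dt + sigma dw, with sigma^2 = 2 lam^2 theta, is an Ornstein-Uhlenbeck process whose marginal is Gaussian with a known mean and variance, so the conditional score is available exactly. This is not the published implementation, only a sketch of the idea.

```python
import numpy as np

def forward_marginal(x0, mu, t, theta=2.0, lam=0.1):
    """Closed-form mean and variance of x(t) given x(0) = x0.

    Assumes dx = theta * (mu - x) dt + sigma dw with constant theta and
    sigma**2 = 2 * lam**2 * theta (illustrative choice).
    """
    decay = np.exp(-theta * t)
    mean = mu + (x0 - mu) * decay          # reverts from x0 towards mu
    var = lam**2 * (1.0 - decay**2)        # grows from 0 towards lam**2
    return mean, var

def sample_forward(x0, mu, t, rng, theta=2.0, lam=0.1):
    """Draw x(t) directly from the closed-form Gaussian marginal."""
    mean, var = forward_marginal(x0, mu, t, theta, lam)
    return mean + np.sqrt(var) * rng.standard_normal(np.shape(x0))

def conditional_score(x, x0, mu, t, theta=2.0, lam=0.1):
    """Exact score of the Gaussian marginal p_t(x | x0); requires t > 0."""
    mean, var = forward_marginal(x0, mu, t, theta, lam)
    return -(x - mean) / var

rng = np.random.default_rng(0)
x0 = rng.uniform(size=(8, 8))   # stand-in for a clean image
mu = 0.5 * x0 + 0.2             # stand-in for its degraded version (hypothetical)
xt = sample_forward(x0, mu, t=1.0, rng=rng)
score = conditional_score(xt, x0, mu, t=1.0)
```

At `t = 0` the marginal collapses onto the clean image, and for large `t` it approaches a Gaussian centred on the degraded image with variance `lam**2`, which is the starting point for the reverse-time simulation.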

The forward SDE degrades a clean image towards a noisy low-quality mean; the learned reverse-time SDE restores it.

IR-SDE progressively removes rain streaks via the reverse-time SDE.

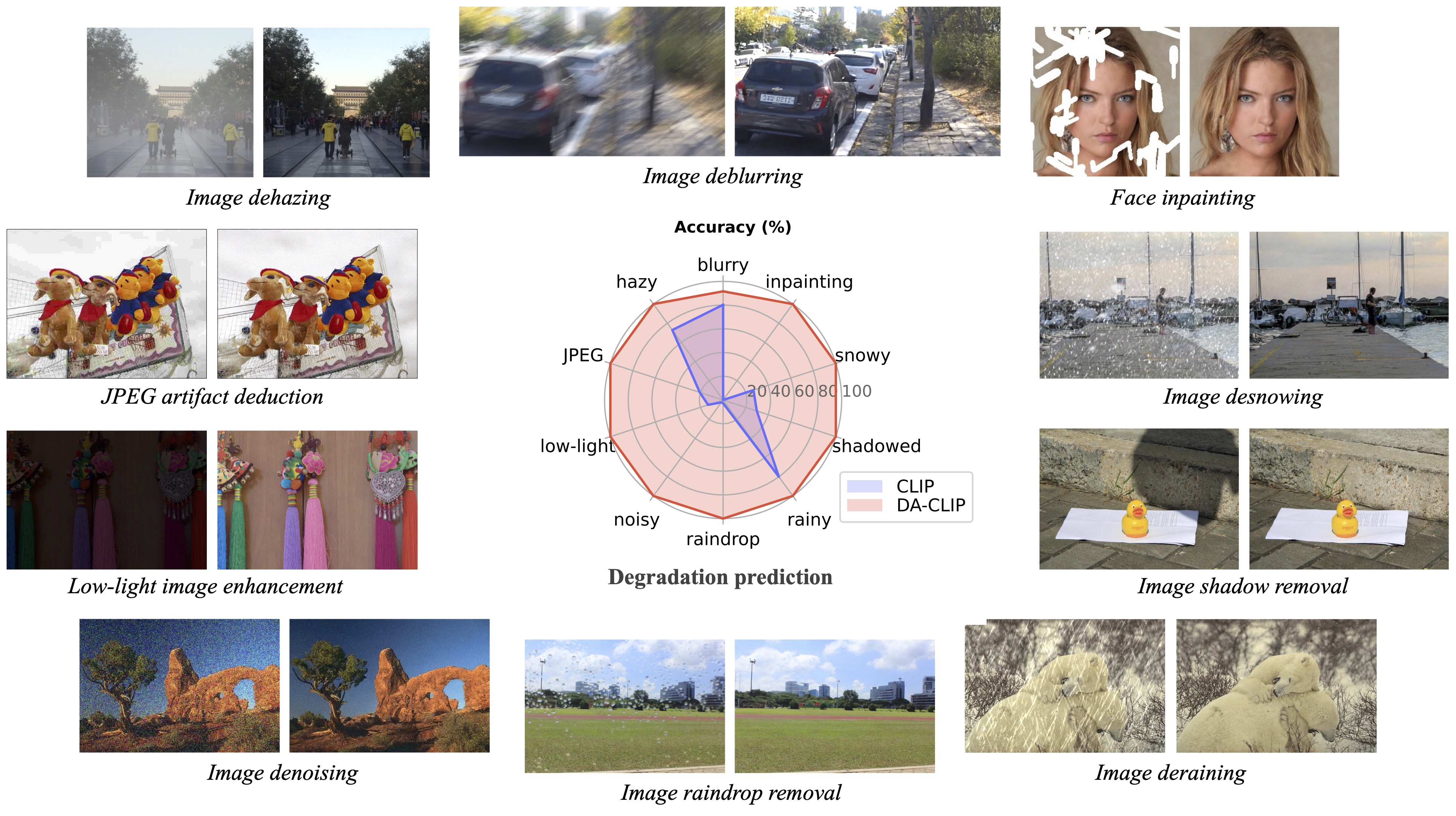

In a follow-up, we showed how vision-language models can be steered for universal image restoration across ten different degradation types, using degradation-aware CLIP embeddings to guide the restoration process.

DA-CLIP handles ten different image degradation types with a single model, using degradation-aware CLIP embeddings.

- Luo Z, Gustafsson F K, Zhao Z, Sjölund J, and Schön T B. Image restoration with mean-reverting stochastic differential equations. ICML (2023).

- Luo Z, Gustafsson F K, Zhao Z, Sjölund J, and Schön T B. Controlling vision-language models for universal image restoration. ICLR (2024).

- Luo Z, Gustafsson F K, Sjölund J, and Schön T B. Forward-only diffusion probabilistic models. arXiv (2025).

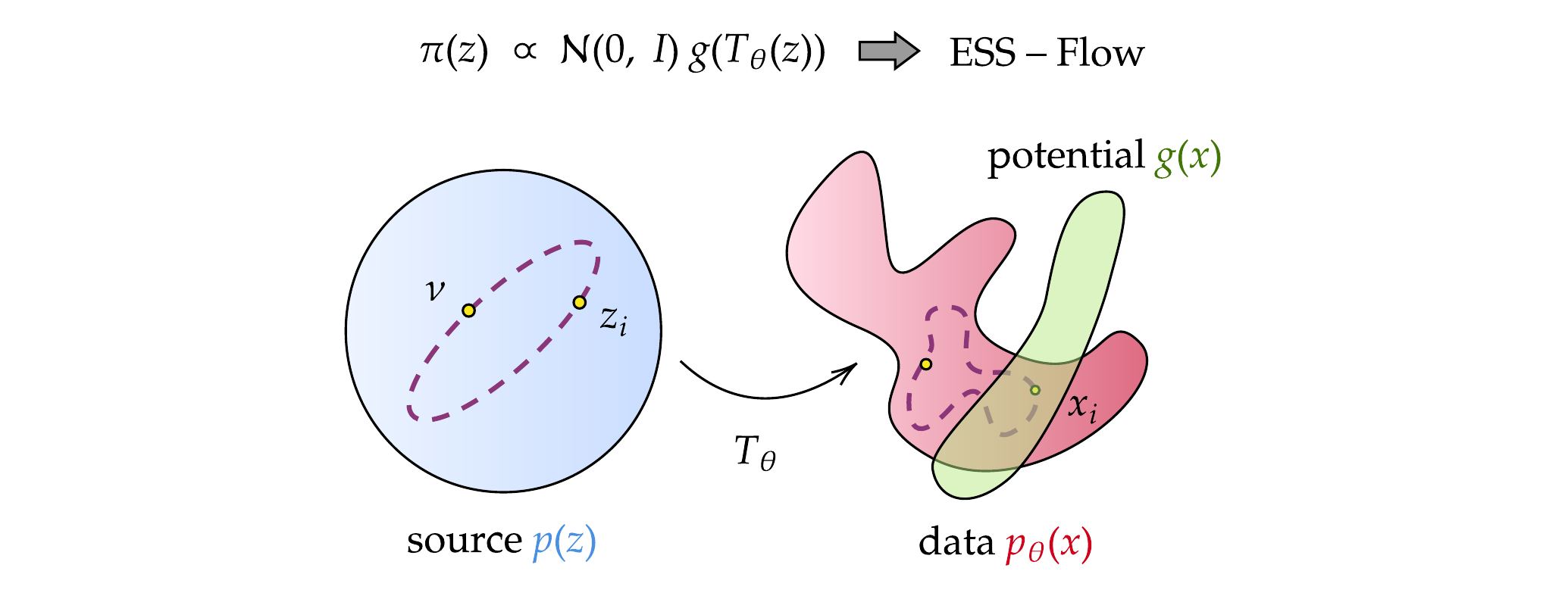

Training-free conditional inference

A key challenge in incorporating pretrained generative models into Bayesian experimental design loops is performing conditional inference without costly retraining. We are developing methods for training-free guidance of flow-based and diffusion models, which enable conditional sampling from a pretrained model by exploiting the structure of its source space.

ESS-Flow performs conditional inference by applying a potential function in the source space of a flow model, avoiding costly retraining.
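To make the idea of inference in source space concrete, here is a toy sketch. It assumes the source distribution is a standard Gaussian and uses elliptical slice sampling, a gradient-free sampler for Gaussian priors; that sampler choice is an assumption of this example and is not stated above. The affine `flow` function is a hypothetical stand-in for a pretrained flow's source-to-data map, and the Gaussian observation model in `log_potential` is likewise made up for illustration.

```python
import numpy as np

def elliptical_slice_step(z, log_potential, rng):
    """One elliptical slice sampling update targeting N(z; 0, I) * exp(log_potential(z))."""
    nu = rng.standard_normal(z.shape)               # auxiliary draw from the Gaussian prior
    log_y = log_potential(z) - rng.exponential()    # slice height (log of a uniform fraction)
    angle = rng.uniform(0.0, 2.0 * np.pi)
    lo, hi = angle - 2.0 * np.pi, angle
    while True:
        z_new = z * np.cos(angle) + nu * np.sin(angle)
        if log_potential(z_new) > log_y:
            return z_new
        # Shrink the bracket towards angle 0, which corresponds to the current state.
        if angle < 0.0:
            lo = angle
        else:
            hi = angle
        angle = rng.uniform(lo, hi)

def flow(z):
    # Hypothetical stand-in for a pretrained flow mapping source samples to data.
    return 2.0 * z + 1.0

y_obs, noise_std = 3.0, 0.5   # illustrative observation and noise level

def log_potential(z):
    # Gaussian log-likelihood of the observation, evaluated through the flow.
    return -0.5 * np.sum((y_obs - flow(z)) ** 2) / noise_std**2

rng = np.random.default_rng(0)
z = rng.standard_normal(1)
source_samples = []
for _ in range(5000):
    z = elliptical_slice_step(z, log_potential, rng)
    source_samples.append(z)
source_samples = np.array(source_samples)
data_samples = flow(source_samples)   # conditional samples in data space
```

The flow itself is only evaluated, never retrained or differentiated: the conditioning enters solely through the potential applied to source samples. Because the toy flow is affine, the posterior over `z` is Gaussian with mean 16/17, which gives a simple check on the sampler.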

- Kalaivanan A, Zhao Z, Sjölund J, and Lindsten F. ESS-Flow: Training-free guidance of flow-based models as inference in source space. arXiv (2025).

- Corenflos A, Zhao Z, Särkkä S, Sjölund J, and Schön T B. Conditioning diffusion models by explicit forward-backward bridging. AISTATS (2025).

Reviews and broader contributions

We have contributed two review articles to Philosophical Transactions of the Royal Society A: one on conditional sampling within generative diffusion models, and one on the state of the art in diffusion-based image restoration. In reinforcement learning, we have shown how diffusion models can serve as expressive policy representations for offline RL.

- Zhao Z, Luo Z, Sjölund J, and Schön T B. Conditional sampling within generative diffusion models. Phil. Trans. R. Soc. A (2025).

- Zhao Z, Luo Z, Sjölund J, and Schön T B. Taming diffusion models for image restoration: a review. Phil. Trans. R. Soc. A (2025).

- Zhang R, Luo Z, Chen Z, Sjölund J, and Schön T B. Entropy-regularized diffusion policy with Q-ensembles for offline reinforcement learning. NeurIPS (2024).